What you’ll learn: This guide covers the complete framework for source gap analysis in AI search: what it is, how AI selects sources, the four buckets of source performance, citation rate benchmarks, and a step-by-step process to identify and close gaps. No prior GEO experience required.

Key takeaways:

- Source gap analysis identifies prompts where AI cites competitors but not your brand

- Every piece of content falls into one of four buckets: not retrieved, retrieved but never cited, inconsistently cited, or frequently cited

- Bucket 3 (inconsistently cited) has the highest ROI for optimization

- Citation rate benchmarks differ by model: ChatGPT > 2.5, Google AI Mode > 1.2, Perplexity > 0.5

- Comprehensive listicles and comparison pages get cited more than single-topic content

- Manual analysis breaks down beyond 15-20 prompts. Automation is essential at scale

What Is Source Gap Analysis?

Source gap analysis is the process of identifying prompts and topics where AI models retrieve and cite your competitors’ content but not yours. It’s the GEO equivalent of a keyword gap analysis in traditional SEO, except instead of looking at search rankings, you’re looking at which URLs AI models choose to reference in their generated answers.

When someone asks ChatGPT “What is the best headless CMS for small business?” and competitors like Contentful or Storyblok are cited in the response but your brand isn’t, that’s a source gap.

There are actually two related types of gaps:

- Source gap (URL-level): Your specific URLs are not being retrieved or cited for relevant prompts

- Brand mention gap: Your brand name is absent from AI responses entirely, even when competitors are named

This guide focuses on source gaps, the URL-level analysis. Understanding these gaps is the foundation for improving your AI search visibility.

How AI Models Select Sources

Before diving into the analysis, it helps to understand how LLMs actually choose which sources to cite. The process differs from traditional search in fundamental ways.

When you ask an AI model a question, here’s what happens:

1. Query expansion: The model generates multiple related search queries from your prompt (called “query fan-outs”)

2. Source retrieval: It searches the web and collects the most relevant URLs for each query

3. Quality evaluation: It evaluates each source based on authority, content relevance, freshness, and structure

4. Source selection: It decides which retrieved URLs to actually read in full and potentially cite

5. Answer synthesis: It compiles information from selected sources and generates a response with citations

The critical insight is that being retrieved is not the same as being cited. Your content might appear in step 2 but get filtered out at step 4. This is exactly what source gap analysis helps you uncover.

The Four Buckets of Source Performance

Every piece of content on your site falls into one of four performance buckets in AI search. Understanding which bucket your content is in determines what action you need to take.

| Bucket | Status | What It Means | Action Required |
|---|---|---|---|
| 1 | Not retrieved | AI doesn’t find your content at all | Fix SEO fundamentals. Your content isn’t showing up in web search results that AI models query |
| 2 | Retrieved, never cited | AI finds your content but never references it | Improve content quality, structure, and authority signals |
| 3 | Retrieved, inconsistently cited | AI sometimes cites you, but not reliably | Optimize for citability. This is where source gap analysis has the highest ROI |
| 4 | Retrieved, frequently cited | AI consistently cites your content | Maintain and expand. You’re doing well here |

Where to focus: Bucket 3 is the sweet spot for source gap analysis. Your content is already good enough for AI to consider it, but something specific is preventing consistent citations. That’s a solvable problem.
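As a minimal sketch, the four buckets can be expressed as a classification over two counts per URL. The function name `classify_bucket` and the `frequent_threshold` cutoff are assumptions made for illustration; the guide does not define where "inconsistently cited" ends and "frequently cited" begins, so substitute whatever cutoff fits the model you are tracking.

```python
from enum import Enum


class Bucket(Enum):
    NOT_RETRIEVED = 1
    RETRIEVED_NEVER_CITED = 2
    INCONSISTENTLY_CITED = 3
    FREQUENTLY_CITED = 4


def classify_bucket(times_retrieved: int, times_cited: int,
                    frequent_threshold: float = 0.5) -> Bucket:
    """Assign a URL to one of the four buckets.

    frequent_threshold is an assumed cutoff, not a value from this guide.
    """
    if times_retrieved == 0:
        return Bucket.NOT_RETRIEVED
    if times_cited == 0:
        return Bucket.RETRIEVED_NEVER_CITED
    rate = times_cited / times_retrieved
    if rate >= frequent_threshold:
        return Bucket.FREQUENTLY_CITED
    return Bucket.INCONSISTENTLY_CITED
```

For example, `classify_bucket(10, 3)` lands in bucket 3 under the assumed cutoff, which is the group the rest of this guide concentrates on.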

Why Bucket 3 matters most

Content in bucket 3 is already past the hardest hurdle: getting retrieved. The gap between “sometimes cited” and “consistently cited” often comes down to:

- Content structure: LLMs prefer clear headings, lists, and structured data they can easily parse

- Content format: Comprehensive listicles and comparison pages get cited more than single-topic reviews

- Freshness: Outdated content gets deprioritized, especially for “best of” and product queries

- Depth: Surface-level content loses to thorough, multi-faceted coverage

Understanding Citation Rate

Citation rate is the core metric for source gap analysis. It measures how often a retrieved URL is actually used (cited) in the AI’s response.

Citation rate = Number of times cited ÷ Number of times retrieved

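A URL that gets retrieved for 10 prompts but only cited in 3 responses has a citation rate of 0.3. Because a model can cite the same URL more than once in a single answer, the rate can exceed 1 when every citation is counted, which is why some of the benchmarks below are greater than 1.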
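The formula above translates directly into a small helper. This is a sketch; the function name `citation_rate` is an assumption, and returning `None` for a URL that was never retrieved is one possible convention for avoiding a division by zero.

```python
from typing import Optional


def citation_rate(times_cited: int, times_retrieved: int) -> Optional[float]:
    """Citation rate = times cited / times retrieved.

    Returns None when the URL was never retrieved, since the rate is
    undefined in that case.
    """
    if times_retrieved == 0:
        return None
    return times_cited / times_retrieved


print(citation_rate(3, 10))  # 0.3
```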

Citation rate benchmarks by AI model

Each AI model cites content differently. Here are general benchmarks for strong citation performance:

| AI Model | Strong Citation Rate | Notes |
|---|---|---|
| ChatGPT | > 2.5 average | ChatGPT is generous with citations and often cites the same source multiple times |
| Google AI Mode | > 1.2 average | Moderately citation-friendly, tends to cite a focused set of sources |
| Perplexity | > 0.5 average | More conservative. Perplexity is selective about which sources it explicitly cites |
| Gemini | > 1.0 average | Varies significantly by query type |

Why the numbers differ: ChatGPT often retrieves 2-3 URLs from the same domain and may cite a source multiple times within one answer. Perplexity, in contrast, is more selective and may retrieve your URL but cite it sparingly. This is why tracking per-model performance matters.
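One way to apply the per-model benchmarks is a lookup table keyed by model name. The thresholds below are copied from the table above; the names `STRONG_CITATION_RATE` and `meets_benchmark` are assumptions for illustration.

```python
# Thresholds taken from the benchmark table in this guide.
STRONG_CITATION_RATE = {
    "ChatGPT": 2.5,
    "Google AI Mode": 1.2,
    "Perplexity": 0.5,
    "Gemini": 1.0,
}


def meets_benchmark(model: str, average_rate: float) -> bool:
    """Return True when the average citation rate beats the model's benchmark."""
    return average_rate > STRONG_CITATION_RATE[model]
```

The same average rate can be strong on one model and weak on another: 0.6 clears the Perplexity benchmark but falls well short of the ChatGPT one.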

What a low citation rate tells you

If your URLs are being retrieved but have a citation rate below these benchmarks, look for these common issues:

- Thin content: The page doesn’t contain enough substantive information for AI to extract

- Poor structure: Long text blocks without headings, lists, or tables make it hard for LLMs to parse

- Single-product focus: AI models prefer pages covering multiple items (listicles, roundups) over individual product reviews

- Missing summary: No TL;DR or key takeaways section for AI to quickly extract findings

- Outdated information: Dates, statistics, or product details that are clearly stale

Step-by-Step: Running Your First Source Gap Analysis

Step 1: Define Your Prompt Universe

Start by identifying the prompts and topics most important to your business. These are the queries where you want AI to cite your brand.

Organize them by intent, as different intent types require different content strategies:

| Intent Type | Example Prompts | Why It Matters |
|---|---|---|
| Branded | “What is ClayHog?”, “ClayHog reviews” | Protects and controls your brand narrative |
| Informational | “What is GEO?”, “How does AI search work?” | Builds authority and brand awareness |
| Navigational | “How to set up [tool]”, “[Brand] features” | Captures users looking for specific pages |
| Commercial | “Best headless CMS for enterprise”, “Top CMS platforms 2026” | Directly influences purchase decisions |
| Transactional | “Contentful vs Storyblok”, “[Product] pricing” | Captures high-intent users ready to buy |

Pro tip: Tag your prompts by intent from the start. When you analyze citation data later, filtering by commercial and transactional intent will show you the gaps that directly impact revenue.

Aim for 30-50 prompts to start. Cover your core product categories, competitor comparison queries, and the informational topics you want to own. For a deeper dive on discovering prompts, see our guide on how to use topic research for GEO.
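Tagging by intent can be as simple as storing the intent next to each prompt. The sketch below assumes a `Prompt` record and a `revenue_prompts` helper, both hypothetical names; the example prompts come from the table above.

```python
from dataclasses import dataclass

# Intents the guide singles out as directly tied to revenue.
REVENUE_INTENTS = {"commercial", "transactional"}


@dataclass(frozen=True)
class Prompt:
    text: str
    intent: str  # branded, informational, navigational, commercial, transactional


prompts = [
    Prompt("What is GEO?", "informational"),
    Prompt("Best headless CMS for enterprise", "commercial"),
    Prompt("Contentful vs Storyblok", "transactional"),
]


def revenue_prompts(items):
    """Keep only prompts with commercial or transactional intent."""
    return [p for p in items if p.intent in REVENUE_INTENTS]
```

Filtering this way before looking at citation data keeps the first pass focused on the gaps most likely to affect revenue.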

Step 2: Track Sources Across AI Models

For each prompt, you need to record two things across every AI platform:

- Which URLs were retrieved (sources the model considered)

- Which URLs were actually cited (sources used in the answer)

Query the same prompts across:

- ChatGPT (GPT-4)

- Perplexity

- Google Gemini

- Google AI Mode

This is where the manual approach becomes painful fast. With 40 prompts across 4 platforms, you’re looking at 160 queries, and you’d need to repeat this regularly.
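To make the bookkeeping concrete, here is a sketch of one record per prompt and platform, holding the two URL lists described above. The `Observation` record, the `build_query_plan` helper, and the exact platform list are assumptions for illustration; filling in the URL lists is left to whatever collection method you use.

```python
from dataclasses import dataclass, field
from itertools import product
from typing import List

# Assumed platform list; adjust to the models you actually track.
PLATFORMS = ["ChatGPT", "Perplexity", "Google Gemini", "Google AI Mode"]


@dataclass
class Observation:
    prompt: str
    platform: str
    retrieved_urls: List[str] = field(default_factory=list)  # sources considered
    cited_urls: List[str] = field(default_factory=list)      # sources used in the answer


def build_query_plan(prompts, platforms):
    """Create one empty observation for every prompt and platform pair."""
    return [Observation(prompt=p, platform=m) for p, m in product(prompts, platforms)]


plan = build_query_plan([f"prompt {i}" for i in range(40)], PLATFORMS)
print(len(plan))  # 160
```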

Step 3: Map Competitor Domain Performance

Before looking at individual URLs, zoom out to the domain level. Create a competitor matrix showing:

| Domain | Times Retrieved | Times Cited | Citation Rate | Top Content Type |
|---|---|---|---|---|
| contentful.com | 85 | 62 | 0.73 | Listicles, comparisons |
| storyblok.com | 64 | 28 | 0.44 | Guides, docs |
| yourbrand.com | 42 | 12 | 0.29 | Blog posts |
| reddit.com | 120 | 38 | 0.32 | Discussion threads |

This view tells you:

- Who your real AI search competitors are (they may differ from your traditional SEO competitors)

- Which domains AI models trust most for your topic area

- Whether your issue is retrieval (not found) or citation (found but not used)
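A domain-level matrix like the one above can be built by rolling URL observations up to their host name. This is a sketch under stated assumptions: the function name `domain_matrix` and the input shape (one pair of retrieved and cited URL lists per AI response) are hypothetical, and the sample URLs are made up.

```python
from collections import defaultdict
from urllib.parse import urlparse


def domain_matrix(observations):
    """Aggregate (retrieved_urls, cited_urls) pairs into per-domain rows.

    Returns (domain, times_retrieved, times_cited, citation_rate) tuples,
    highest citation rate first. Hosts are compared as written, so
    "www.example.com" and "example.com" count as different domains.
    """
    totals = defaultdict(lambda: {"retrieved": 0, "cited": 0})
    for retrieved_urls, cited_urls in observations:
        for url in retrieved_urls:
            totals[urlparse(url).netloc]["retrieved"] += 1
        for url in cited_urls:
            totals[urlparse(url).netloc]["cited"] += 1
    rows = []
    for domain, t in totals.items():
        rate = t["cited"] / t["retrieved"] if t["retrieved"] else None
        rows.append((domain, t["retrieved"], t["cited"], rate))
    return sorted(rows, key=lambda r: r[3] if r[3] is not None else -1.0, reverse=True)


sample = [
    (["https://contentful.com/a", "https://yourbrand.com/b"],
     ["https://contentful.com/a"]),
    (["https://contentful.com/a", "https://yourbrand.com/b"],
     ["https://contentful.com/a", "https://yourbrand.com/b"]),
]
for row in domain_matrix(sample):
    print(row)
```

A domain with many retrievals but a low rate points to a citation problem, while a domain that barely appears at all points to a retrieval problem.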

Step 4: Identify Low-Citation URLs

Now drill into your own domain’s URLs. Sort by citation rate to find the content in bucket 3: pages that are retrieved but inconsistently cited.

Look for patterns:

- What content types have low citation rates? (e.g., individual product pages vs. comparison pages)

- What structural elements are missing? (e.g., no headings, no tables, no summary)

- How does content length compare? Longer, more comprehensive content tends to get cited more

- Are there freshness issues? Check publication and modification dates
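The sorting step can be sketched as a filter that keeps only URLs that are retrieved, cited at least once, and still below a chosen benchmark. The function name `low_citation_urls`, the input shape, and the example paths are assumptions for illustration.

```python
def low_citation_urls(url_stats, benchmark):
    """Return (url, rate) pairs below the benchmark, lowest rate first.

    url_stats maps each URL to (times_retrieved, times_cited). URLs that
    were never retrieved or never cited are skipped because they belong to
    buckets 1 and 2 and call for different fixes.
    """
    candidates = []
    for url, (retrieved, cited) in url_stats.items():
        if retrieved == 0 or cited == 0:
            continue
        rate = cited / retrieved
        if rate < benchmark:
            candidates.append((url, rate))
    return sorted(candidates, key=lambda item: item[1])


stats = {
    "/blog/a": (10, 2),
    "/blog/b": (8, 0),
    "/compare/c": (10, 9),
    "/blog/d": (20, 1),
}
print(low_citation_urls(stats, benchmark=0.5))
```

With the resulting list in hand, the pattern questions above become easier to answer because the weakest pages sit side by side.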

Step 5: Study High-Citation Content

Now look at the other end: your URLs with the highest citation rates. These are your templates for success.

Common patterns in highly cited content:

- Listicles and roundups: “Top 10 X”, “Best Y for Z” format performs consistently well because AI can extract multiple data points from a single source

- Comparison tables: Side-by-side feature comparisons in table format are easy for LLMs to parse

- Structured guides: Clear H2/H3 hierarchy with concise paragraphs under each heading

- Fresh content: Recently published or updated content with clear dates

- Original data: Statistics, benchmarks, or research findings unique to your brand

Step 6: Prioritize and Act

Not all gaps are worth closing. Prioritize based on:

- Revenue impact: Commercial and transactional intent prompts first

- Gap size: How many competitors are being cited where you aren’t?

- Effort required: Can you restructure existing content, or do you need to create from scratch?

- Quick wins: Content already in bucket 3 that just needs formatting and structural improvements
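The four criteria above can be combined into a rough score for ranking gaps. This is only a sketch: the `Gap` record, the `priority_score` function, and every weight in it are assumptions chosen for illustration, not values recommended by this guide.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    prompt: str
    intent: str
    competitors_cited: int   # competitors cited where you are not
    in_bucket_3: bool        # already retrieved and sometimes cited
    needs_new_content: bool  # True if nothing existing can be restructured


def priority_score(gap: Gap) -> float:
    """Higher score means close this gap sooner. All weights are illustrative."""
    score = 0.0
    if gap.intent in {"commercial", "transactional"}:
        score += 3.0                                # revenue impact
    score += min(gap.competitors_cited, 5) * 0.5    # gap size, capped
    if gap.in_bucket_3:
        score += 2.0                                # quick win
    if gap.needs_new_content:
        score -= 1.0                                # extra effort
    return score


gaps = [
    Gap("What is GEO?", "informational", 2, False, True),
    Gap("Best headless CMS for enterprise", "commercial", 4, True, False),
]
ranked = sorted(gaps, key=priority_score, reverse=True)
print([g.prompt for g in ranked])
```

Tune the weights to your own business; the point is only to make the trade-off between impact and effort explicit.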

Content Strategies That Close Source Gaps

Restructure Existing Content

The fastest wins come from improving content you already have:

- Add clear H2/H3 headings that match how people phrase prompts

- Add a summary section at the top of every article with key findings

- Convert long paragraphs into structured lists and tables

- Add comparison elements. Even a simple “Pros/Cons” section improves citability

- Update dates and statistics to signal freshness
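Before restructuring, it can help to check which structural elements a page already has. The sketch below uses Python's standard `html.parser` module to count headings, lists, and tables in a page's HTML. The class name `StructureAudit` and the function `audit_structure` are hypothetical, and the presence of these tags is only a rough proxy for the structure described above.

```python
from html.parser import HTMLParser


class StructureAudit(HTMLParser):
    """Count the structural tags that make a page easier to parse."""

    def __init__(self):
        super().__init__()
        self.counts = {"h2": 0, "h3": 0, "ul": 0, "ol": 0, "table": 0}

    def handle_starttag(self, tag, attrs):
        if tag in self.counts:
            self.counts[tag] += 1


def audit_structure(html: str) -> dict:
    """Report whether the page has headings, lists, and tables."""
    parser = StructureAudit()
    parser.feed(html)
    parser.close()
    c = parser.counts
    return {
        "has_headings": c["h2"] + c["h3"] > 0,
        "has_lists": c["ul"] + c["ol"] > 0,
        "has_tables": c["table"] > 0,
    }


page = "<h2>Pricing</h2><p>Long text</p><table><tr><td>x</td></tr></table>"
print(audit_structure(page))
```

Pages that come back with every flag set to `False` are natural first candidates for the restructuring steps in the list above.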

Create Comprehensive Listicles

AI models consistently favor multi-item content over single-topic pages. If your site has individual product reviews:

- Keep the individual reviews (they serve SEO purposes)

- Create hub pages that compile key findings, like “Top 10 Headless CMS Platforms in 2026”

- Link from the hub to individual reviews and vice versa

- Ensure the hub page has structured comparison tables

Build Content Clusters

Don’t just create isolated articles. Build interconnected content around your target topics:

- Pillar page: Comprehensive overview of the broad topic

- Supporting guides: Deep dives into subtopics

- Comparison pages: Head-to-head evaluations

- FAQ content: Address common questions with clear, concise answers

- Internal links: Connect everything together to signal topical authority

Optimize for Citability

Make your content as easy as possible for AI to extract and reference:

- Use definition-style formatting like “X is…” sentences that AI can quote directly

- Include numbered lists for step-by-step processes

- Add data tables with clear headers

- Include author credentials and E-E-A-T signals

- Use schema markup where appropriate
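As one example of schema markup, FAQ content can be described with the schema.org `FAQPage` type. The sketch below builds the JSON-LD with Python's `json` module; the helper name `faq_jsonld` is an assumption, and whether a given AI model actually uses this markup is not something this example can confirm.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    ("What is source gap analysis in AI search?",
     "Source gap analysis is the process of identifying prompts and topics "
     "where AI models cite your competitors but not your brand."),
])
markup = json.dumps(faq, indent=2)
print(markup)
```

The resulting JSON is typically embedded in the page inside a `<script type="application/ld+json">` element.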

Earn External Authority

AI models give more weight to sources that other authoritative content cites:

- Publish original research and data that others will reference

- Contribute to industry publications to build brand authority

- Create shareable tools and calculators that earn natural backlinks

- Get cited in existing high-authority listicles in your space

For more on how domain-level authority affects your chances of being cited, read our guide to domain signals and AI citations.

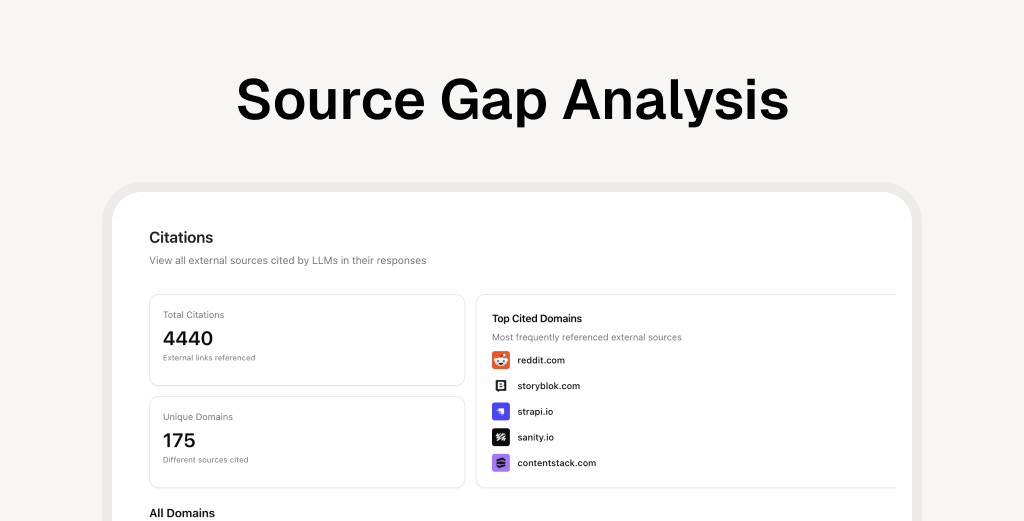

Automating Source Gap Analysis with ClayHog

Manual source gap analysis works for an initial audit, but it doesn’t scale. With 40+ prompts across 4 AI platforms, manual tracking means 160+ queries that need regular re-runs. Citation rates shift as AI models update, competitors publish new content, and your own content changes.

ClayHog automates the entire workflow:

- Continuous prompt tracking across ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews

- Automatic citation monitoring that surfaces which URLs are being retrieved and cited, and which aren’t

- Competitor citation tracking so you can see exactly where competitors appear and you don’t

- Brand Visibility Score that trends over time so you can measure the impact of your optimizations

- Content creator that generates optimized content based on your specific source gaps

Instead of manually querying AI platforms every week, ClayHog continuously monitors and alerts you to new gaps and opportunities, letting you focus on creating the content that closes them.

Frequently Asked Questions

What is source gap analysis in AI search?

Source gap analysis is the process of identifying prompts and topics where AI models cite your competitors but not your brand. It reveals content you need to create or improve so that AI platforms like ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews reference your website in their responses.

What is a good citation rate in AI search?

Citation rate benchmarks vary by model. For ChatGPT, an average citation rate above 2.5 is strong. For Google AI Mode, above 1.2 is good. Perplexity is more conservative, and an average above 0.5 indicates strong performance. These benchmarks are model-specific because each AI platform handles citations differently.

What is the difference between a source gap and a brand mention gap?

A source gap means your URL is not being retrieved or cited by AI models for relevant queries. A brand mention gap means your brand name is absent from AI responses even when competitors are mentioned. Source gaps are URL-level and tell you which specific pages need optimization. Brand mention gaps are entity-level and indicate broader brand authority issues.

How often should I run a source gap analysis?

At minimum monthly, but ideally continuously with automated tracking. AI models update their responses frequently, and competitor content changes can shift citation patterns within days. Manual monthly audits catch the big trends, but automated tools like ClayHog provide real-time visibility.

Do I need a tool to do source gap analysis?

You can start manually by querying AI platforms and recording results in a spreadsheet. But manual analysis becomes impractical beyond 15-20 prompts. A GEO platform like ClayHog automates tracking across multiple AI platforms, monitors citation rates over time, and surfaces gaps automatically.

How long does it take to close a source gap?

It depends on the type of gap. Structural improvements to existing content (better headings, tables, summaries) can show results within weeks as AI models re-crawl your pages. Creating new content from scratch typically takes 1-3 months to gain traction. The key is consistent tracking so you can measure what’s working.

What’s the difference between a source gap and a keyword gap?

A keyword gap identifies search terms where competitors rank in traditional Google search but you don’t. A source gap identifies prompts where AI models cite competitors’ content in their generated responses but don’t cite yours. Both are valuable, but they require different strategies. Keyword gaps need traditional SEO, while source gaps need GEO-specific content optimization.

Why does the same content perform differently across AI models?

Each AI model has its own retrieval pipeline, ranking signals, and citation behavior. ChatGPT tends to be citation-generous, while Perplexity is more selective. Google AI uses its own search index. This is why tracking performance per-model is important. A gap in ChatGPT doesn’t necessarily mean a gap in Perplexity.