You fixed your SEO. You publish content weekly. Your domain authority is solid. But when you test prompts in ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews, your competitors get cited and you don’t.

The gap isn’t always about doing more work. Sometimes it’s about fixing what’s already broken. A robots.txt file that blocks AI crawlers costs you 100% of your visibility on those platforms. Tracking only branded prompts means you miss 90% of the opportunity. Publishing content once and never updating it loses you citations to competitors who refresh theirs monthly.

This guide covers the 7 most common generative engine optimization (GEO) mistakes that kill AI citation rates, and the specific fix for each one.

What you’ll learn: The most damaging mistakes brands make in GEO, why each one prevents AI citations, and exactly what to do to fix them. Each mistake includes the impact level and how long the fix takes.

Key takeaways:

- Blocked AI crawlers are the #1 citation killer, and the fix takes 2 minutes

- Tracking only branded prompts means missing where users actually discover new brands

- Content that buries the answer loses citations to content that leads with it

- Weak domain authority blocks citations before content quality even matters

- Publishing content once without updates loses you citations within 60-90 days

- Ignoring sentiment means you might be mentioned but described negatively

- Not tracking competitors means you optimize blindly while they pull ahead

Mistake 1: Blocking AI Crawlers in Your robots.txt

Impact: Critical (100% visibility loss)

Fix time: 2 minutes

How common: 30-40% of sites block at least one AI crawler

If your robots.txt blocks AI crawlers like GPTBot, ClaudeBot, or PerplexityBot, your content is invisible to those platforms. No amount of content optimization will matter if the door is locked.

Most sites that block AI crawlers didn’t do it intentionally. The blocks come from blanket bot-blocking rules, Cloudflare bot protection, default CMS settings, or legal concerns about AI training. Y Combinator’s Garry Tan discovered in late 2025 that Cloudflare had blocked all AI crawlers on *.ycombinator.com without permission. If it happened to YC, it’s worth checking your site.

The fix takes 2 minutes: Go to yoursite.com/robots.txt and check whether GPTBot, ClaudeBot, PerplexityBot, and Google-Extended are allowed. If any are blocked, remove the Disallow rule that applies to them or add an explicit Allow: / rule under each crawler’s own User-agent line. Use our free GEO audit tool to see exactly which AI crawlers are blocked on your site. For the full list of crawlers to check and the exact code to add, see Step 1 in our brand mentions guide.
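If you’d rather script the check, here is a minimal sketch using Python’s standard-library robots.txt parser. The sample file and the `blocked_crawlers` helper are illustrative assumptions, not part of any tool mentioned in this guide, and the crawler list only covers the four tokens named above.

```python
from urllib.robotparser import RobotFileParser

# Crawler tokens named in this guide; extend the list as needed.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_crawlers(robots_txt: str, url: str = "https://yoursite.com/") -> list[str]:
    """Return the AI crawler tokens that robots_txt disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt: a blanket block with one explicit exception.
SAMPLE = """\
User-agent: GPTBot
Allow: /

User-agent: *
Disallow: /
"""

print(blocked_crawlers(SAMPLE))
# ['ClaudeBot', 'PerplexityBot', 'Google-Extended']
```

In the sample, the blanket `User-agent: *` rule blocks every crawler that has no group of its own, which is how most accidental blocks happen. To test a live site instead of a string, the same parser can load the file with `set_url(...)` followed by `read()`. Keep in mind this only covers robots.txt, not firewall or CDN rules.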

Don’t stop at robots.txt. Also check whether Cloudflare bot protection, JavaScript rendering, or paywalls are blocking crawlers indirectly.

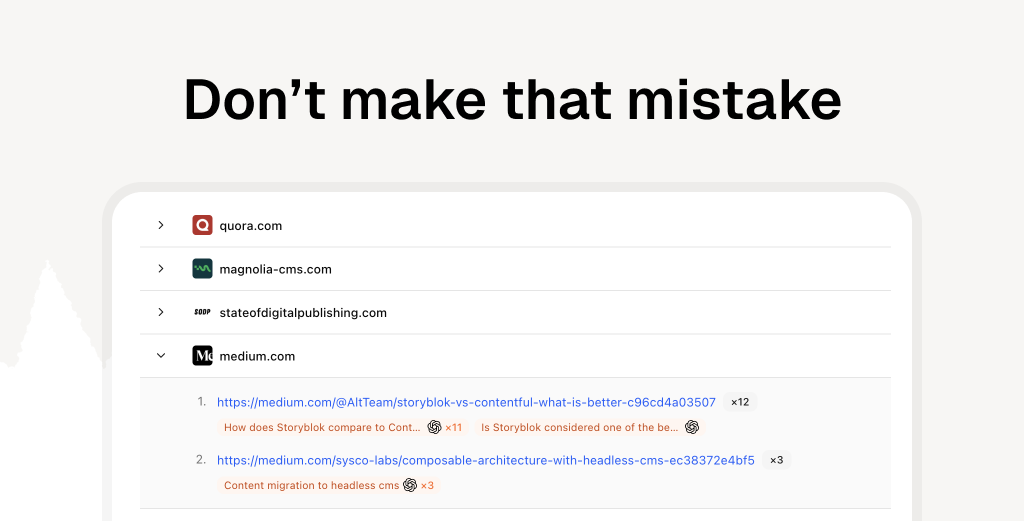

Mistake 2: Only Tracking Branded Prompts

Impact: High (missing 90% of opportunity)

Fix time: 30 minutes

How common: 70%+ of brands only track branded queries

Most brands start GEO by testing prompts like “What is [your brand]?” or “Tell me about [your product].” These prompts matter, but they only show you what AI says to people who already know your name. The real opportunity is in the category prompts where users discover brands for the first time.

The problem with brand-only tracking

Branded prompts tell you:

- Whether AI knows about you

- How AI describes you

- What sources AI cites when asked about you directly

But they don’t tell you:

- Whether you appear when users search your category

- How you compare to competitors in category prompts

- Which use cases trigger your competitors but not you

- Where the actual brand discovery happens

What to track instead

Add these prompt types to your tracking:

Category prompts (highest priority):

- “Best [your category] tools in 2026”

- “What’s the best [category] for [use case]?”

- “Top [category] platforms for [audience]”

Use case prompts:

- “How do I [problem you solve]?”

- “[Your category] for [specific need]”

- “Tool to help with [pain point]”

Competitor comparison prompts:

- “[Your brand] vs [competitor]”

- “Alternatives to [competitor]”

- “Best [competitor] alternative”

Problem-solution prompts:

- “Why is [problem] happening?”

- “How to fix [common issue]”

- “What causes [challenge your product solves]?”

The 80/20 prompt mix

For a balanced view of your AI visibility:

- 20% branded prompts (your brand name)

- 40% category prompts (discovery queries)

- 20% use case prompts (problem-solution)

- 20% competitor prompts (comparison and alternatives)

This distribution shows you both how AI describes you directly and whether you appear where customers actually discover new brands.

Start with 20 prompts: 4 branded, 8 category, 4 use case, 4 competitor. This gives you a complete baseline without overwhelming data. Add more as you identify gaps.
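To scale the mix beyond 20 prompts, the arithmetic can be sketched in a few lines. The `prompt_counts` helper is hypothetical, and handing any rounding remainder to category prompts is our assumption, based on category being the highest-priority type.

```python
# Prompt mix from this section, as whole percentages of the tracked set.
PROMPT_MIX_PCT = {"branded": 20, "category": 40, "use_case": 20, "competitor": 20}


def prompt_counts(total: int) -> dict[str, int]:
    """Split a total prompt budget across the four prompt types."""
    counts = {kind: total * pct // 100 for kind, pct in PROMPT_MIX_PCT.items()}
    # Assumption: any rounding remainder goes to category prompts.
    counts["category"] += total - sum(counts.values())
    return counts


print(prompt_counts(20))
# {'branded': 4, 'category': 8, 'use_case': 4, 'competitor': 4}
```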

Mistake 3: Burying Your Answers in Dense Content

Impact: High (content skipped by AI)

Fix time: 1-2 hours per article

How common: 60%+ of content

AI models process hundreds of pages per query. They don’t read every word. If a 2,000-word article takes 6 paragraphs to answer the title question, AI skips it for a competitor’s article that answers it in the first sentence.

The symptoms

Your content ranks well in Google but doesn’t get cited by AI. The article is comprehensive and accurate, but the answer is buried under context, background, and qualifications. AI needs answers up front, not at the bottom.

How to diagnose this

Open any of your guides. Could someone skim just the headings and first sentence of each section and get the complete answer? If not, AI probably can’t extract it either.

Check these specifically:

- Does each H2 heading mirror a real question users ask?

- Is the answer in the first 100 words of each section?

- Are comparisons in table format or buried in paragraphs?

- Is there an FAQ section that maps to common AI prompts?
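The first check in that list is easy to automate. The sketch below collects every H2 on a page and flags the ones that don’t end in a question mark. It’s a rough heuristic under our own assumptions: the `label_headings` helper and the sample HTML are made up for illustration, and a heading can be a perfectly good question without a question mark.

```python
from html.parser import HTMLParser


class H2Collector(HTMLParser):
    """Collect the text content of every <h2> element."""

    def __init__(self) -> None:
        super().__init__()
        self._in_h2 = False
        self.headings: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._in_h2 = True
            self.headings.append("")

    def handle_data(self, data):
        if self._in_h2:
            self.headings[-1] += data

    def handle_endtag(self, tag):
        if tag == "h2":
            self._in_h2 = False


def label_headings(html: str) -> list[str]:
    """Return H2 headings that are not phrased as questions."""
    collector = H2Collector()
    collector.feed(html)
    headings = [h.strip() for h in collector.headings]
    return [h for h in headings if not h.endswith("?")]


# Hypothetical page fragment using the two headings from this section.
SAMPLE_HTML = "<h2>Pricing Structure</h2><p>...</p><h2>How Much Does It Cost?</h2>"
print(label_headings(SAMPLE_HTML))
# ['Pricing Structure']
```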

The fix

Restructure without rewriting. Move the answer to the first sentence of each section. Convert label headings (“Pricing Structure”) to question headings (“How Much Does It Cost?”). Add comparison tables and FAQ sections.

For a detailed before/after walkthrough and step-by-step optimization checklist, see how to get your brand mentioned in AI answers (Step 4). For the quality signals that affect whether AI trusts your content enough to cite it, see how E-E-A-T signals affect citation rates.

Mistake 4: Ignoring Domain Authority

Impact: Critical (blocks citations before content matters)

Fix time: 3-6 months

How common: Common for newer sites

Content quality matters, but domain authority is the gatekeeper. In traditional SEO, a well-optimized page on a DR 25 site can rank for long-tail keywords. AI search is different. AI models use domain authority as a first-pass filter during retrieval. If your domain doesn’t meet the threshold, your content doesn’t get considered, regardless of quality.

Why this is a mistake, not just a limitation

Many brands skip domain building and jump straight to content optimization. They publish 20 AI-optimized articles on a DR 20 site and wonder why nothing gets cited. The articles might be excellent, but AI never even sees them.

BrightEdge research shows 50% of AI-cited sources also rank in Google’s top 10. Sites with DR below 30 rarely get cited. You don’t need to be Wikipedia, but you need to be on the radar.

How to check if this is your problem

Use Ahrefs or SEMrush to check your Domain Rating. If you’re below DR 30, domain building is your first priority, before content optimization, before prompt tracking, before anything else. Budget 3-6 months for meaningful movement.

For the full breakdown of DR thresholds, backlink strategies, and how domain signals affect each stage of the AI citation pipeline, see our domain signals and AI citations guide.

The sequence matters. If DR is below 30, invest in link building first. If DR is 30-40, work on content optimization alongside link building. If DR is 40+, content optimization will have the highest immediate impact.
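Written as a decision rule, the sequence looks roughly like this. The `first_priority` function is a hypothetical illustration, and treating a DR of exactly 40 as part of the “40+” tier is our assumption, since the ranges above overlap at that value.

```python
def first_priority(domain_rating: int) -> str:
    """Map a Domain Rating to the priority described in this section."""
    if domain_rating < 30:
        return "link building first"
    if domain_rating < 40:
        return "content optimization alongside link building"
    # Assumption: DR of exactly 40 counts as the 40+ tier.
    return "content optimization"


for dr in (20, 35, 40):
    print(dr, "->", first_priority(dr))
```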

Mistake 5: Publishing Once and Never Updating

Impact: High (citations decay over time)

Fix time: 1 hour per article per quarter

How common: 80%+ of published content

You publish a comprehensive guide. It earns citations for 2 months. Then a competitor publishes a fresher version, and your citations drop. Within 6 months, you’re not cited at all, even though your content is still accurate.

Why recency kills stale content

AI platforms use modification dates as quality signals. Perplexity shows dates directly next to citations. ChatGPT with browsing prioritizes recently updated content. Gemini factors recency into retrieval ranking. An outdated timestamp signals stale information, even when the content is correct.

This creates a treadmill: you need to keep content fresh to maintain citations you’ve already earned. Stop updating and competitors who do update will take your spot.

How to tell if this is hurting you

Check the dateModified on your top 10 pages. If any are older than 6 months, they’re losing citations to fresher competitors. Look for year references (“in 2024”) that signal outdated content to both AI and readers.
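One way to run this check without opening each page by hand is to read dateModified from the page’s structured data. The sketch below assumes the page exposes it as a top-level field in a JSON-LD script block, which many sites do but not all (some nest it under `@graph`, which this sketch ignores). The helper names, the sample HTML, and the 183-day cutoff standing in for “6 months” are all our assumptions.

```python
import json
from datetime import date, datetime, timedelta
from html.parser import HTMLParser
from typing import Optional


class JsonLdCollector(HTMLParser):
    """Collect the text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False


def date_modified(html: str) -> Optional[date]:
    """Return the first top-level dateModified found in the page's JSON-LD."""
    collector = JsonLdCollector()
    collector.feed(html)
    for block in collector.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue
        if isinstance(data, dict) and "dateModified" in data:
            # Note: Python versions before 3.11 reject a trailing "Z" here.
            return datetime.fromisoformat(data["dateModified"]).date()
    return None


def is_stale(modified: date, today: date, max_age_days: int = 183) -> bool:
    """True if the page was last modified more than max_age_days ago."""
    return today - modified > timedelta(days=max_age_days)


# Hypothetical page with Article markup.
SAMPLE_HTML = """
<html><head>
<script type="application/ld+json">
{"@type": "Article", "headline": "Example", "dateModified": "2025-01-15"}
</script>
</head><body></body></html>
"""

modified = date_modified(SAMPLE_HTML)
print(modified, is_stale(modified, today=date(2025, 9, 1)))
# 2025-01-15 True
```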

The minimum viable update

You don’t need full rewrites. A quarterly refresh of 30-60 minutes per article works: update statistics, replace outdated examples, fix broken links, change the dateModified field, and republish. Set calendar reminders so this doesn’t fall through the cracks.

For a detailed refresh checklist and 10-day update schedule, see Tactic 5 in our visibility score guide.

Mistake 6: Not Monitoring Sentiment

Impact: Medium-High (negative mentions worse than none)

Fix time: Ongoing monitoring

How common: 50%+ of brands don’t track

Being mentioned by AI isn’t always good. If ChatGPT says “While [Your Brand] offers good features, users frequently report reliability issues and slow support,” that mention is actively hurting you. Yet most brands only track whether they’re mentioned, not how.

What negative sentiment looks like

Explicit negative framing:

- “However, users report…”

- “Some customers complain about…”

- “Known for expensive pricing…”

- “Limited feature set compared to…”

Damning with faint praise:

- “[Competitor] is better for most teams, but [Your Brand] works for basic needs”

- “While functional, it lacks the polish of…”

- “A budget option if cost is your main concern”

Buried mentions:

- Listed last in recommendations

- Mentioned only in comparison to better options

- Included with heavy qualifications

Where negative sentiment comes from

AI models synthesize sentiment from multiple sources:

- Reddit threads complaining about your product

- Negative reviews on G2, Capterra, Trustpilot

- Support forum posts about problems

- Tweets and social media criticism

- Outdated information about old issues

Even if you fixed the problem, if the complaints still rank in Google, AI cites them.

How to track sentiment

Manual approach:

- Test your prompts weekly

- Read the full AI responses

- Note positive, neutral, and negative framing

- Track changes over time

Automated approach:

- GEO tools like ClayHog score sentiment automatically

- Track sentiment per prompt, per platform

- Get alerts when sentiment shifts negative

- Compare your sentiment to competitors
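If you’re starting with the manual approach, a simple phrase check can speed up the weekly read-through. This is a deliberately crude sketch: the marker list is taken from the examples earlier in this section, `ExampleCo` is a made-up brand, and plain phrase matching will miss buried mentions and subtler framing, so treat it as a first-pass flag rather than a sentiment score.

```python
# Phrases drawn from the negative-framing examples in this section.
NEGATIVE_MARKERS = [
    "however, users report",
    "customers complain",
    "expensive pricing",
    "limited feature set",
    "lacks the polish",
    "budget option",
    "works for basic needs",
]


def negative_markers(response: str) -> list[str]:
    """Return the known negative phrases that appear in an AI response."""
    text = response.lower()
    return [marker for marker in NEGATIVE_MARKERS if marker in text]


# Hypothetical AI response about a made-up brand.
SAMPLE_RESPONSE = (
    "ExampleCo is a budget option if cost is your main concern. "
    "However, users report slow support."
)
print(negative_markers(SAMPLE_RESPONSE))
# ['however, users report', 'budget option']
```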

How to fix negative sentiment

Address the source:

- Find the pages AI cites that mention your brand negatively

- If it’s accurate, fix the underlying problem and publish updates

- If it’s outdated, publish fresh content about how you fixed it

- If it’s a review site, respond to reviews professionally

Outweigh with positive signals:

- Publish case studies with customer results

- Get more recent positive reviews

- Create content addressing the concerns directly

- Build Reddit and forum presence showing helpful support

Proactive reputation management:

- Monitor brand mentions across Reddit, Twitter, forums

- Respond to criticism quickly and professionally

- Turn detractors into updated positive testimonials

- Create content that preemptively addresses objections

Sentiment matters as much as visibility. Better to not be mentioned than to be recommended with heavy negative qualifications.

Mistake 7: Not Tracking Competitors

Impact: Medium (optimizing blindly)

Fix time: 1 hour setup, ongoing monitoring

How common: 60%+ track themselves only

You optimize your content, build links, and fix technical issues. Your AI visibility improves slightly. But your competitors are doing the same work, and you have no idea whether you’re gaining ground or falling further behind.

Without competitor tracking, you’re optimizing in a vacuum.

What competitor tracking reveals

Citation gaps: Prompts where competitors get cited and you don’t. These are your highest-priority content targets. If AI recommends 3 competitors for “best CRM for startups” and you’re not on the list, that’s a specific, actionable gap.

Share of voice: What percentage of category mentions go to each brand? If you’re at 8% and your competitor is at 35%, you know how far behind you are and can set realistic goals.
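Both metrics are simple to compute once you’ve logged which brands each prompt returns. Here’s a minimal sketch, assuming you record mention counts per brand and the set of brands cited per prompt. The function names, brand names, and numbers are illustrative only.

```python
def share_of_voice(mentions: dict[str, int]) -> dict[str, float]:
    """Percentage of all category mentions that go to each brand."""
    total = sum(mentions.values())
    if total == 0:
        return {brand: 0.0 for brand in mentions}
    return {brand: round(100 * count / total, 1) for brand, count in mentions.items()}


def citation_gaps(cited: dict[str, set[str]], brand: str) -> list[str]:
    """Prompts where at least one other brand is cited and `brand` is not."""
    return [prompt for prompt, brands in cited.items() if brands and brand not in brands]


# Hypothetical tracking data.
mentions = {"YourBrand": 8, "CompetitorA": 35, "Others": 57}
cited = {
    "best CRM for startups": {"CompetitorA", "CompetitorB", "CompetitorC"},
    "CRM for small teams": {"YourBrand", "CompetitorA"},
}

print(share_of_voice(mentions))
# {'YourBrand': 8.0, 'CompetitorA': 35.0, 'Others': 57.0}
print(citation_gaps(cited, "YourBrand"))
# ['best CRM for startups']
```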

Competitor content strategy:

- Which pages competitors get cited for

- What format their content uses

- How they structure information

- What sources they reference

Sentiment comparison: How does AI describe you vs. competitors? If AI says “[Competitor] is best for most teams” but frames you as “budget option,” you have a positioning problem.

Emerging competitors: Brands you didn’t consider competitors may start appearing in AI responses. Treat that as an early warning of market shifts.

Who to track

Don’t track everyone. Start with:

- 3 direct competitors (same category, similar size)

- 1 aspirational competitor (larger brand you’re chasing)

- 1 emerging competitor (newer brand growing fast)

What to monitor

Track the same core prompts for your competitors:

- Do they appear on category prompts you’re targeting?

- How are they described vs. you?

- Which sources cite them?

- What’s their sentiment trend?

- Are they gaining or losing mentions?

How to use competitor data

Find quick wins: Look for prompts where a competitor gets cited but the cited source is a low-authority page (around DR 30). You can create better content and earn that citation.

Understand positioning: If AI consistently frames a competitor as “best for startups” and you as “enterprise-focused,” you either lean into that positioning or publish content that shifts it.

Benchmark progress: “We increased from 5% share of voice to 12%” is more meaningful than “We got 3 new citations this month.”

Prioritize outreach: Run a source gap analysis. The sources that cite your competitors most frequently, but not you, are your highest-value outreach targets.

ClayHog’s competitor tracking lets you monitor up to 5 competitors per prompt across ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews. See exactly where they appear, how they’re described, and which citations you’re competing for.

How to Audit for These Mistakes (15-Minute Checklist)

Run through this checklist to find your biggest gaps:

Crawl access (2 minutes):

- Check yoursite.com/robots.txt for AI crawler blocks

- Verify no Cloudflare or firewall rules are blocking bots

Prompt coverage (3 minutes):

- List your current tracked prompts

- Confirm you’re tracking category and use case prompts, not just branded

Content structure (5 minutes):

- Open your top 3 guides

- Check if answers are in first 100 words

- Verify headings are questions, not labels

Domain authority (2 minutes):

- Check your DR/DA in Ahrefs or SEMrush

- Note if you’re below 30 (critical issue)

Content freshness (2 minutes):

- Check dateModified on your top 10 pages

- Note any older than 6 months (update needed)

Sentiment (1 minute):

- Test 3 category prompts manually

- Note if AI frames you negatively or with qualifications

Competitor tracking (1 minute):

- List your tracked competitors

- If none, add 3 competitors to track

Every unchecked item is costing you citations. For a step-by-step plan to fix these gaps, see 5 ways to improve your brand visibility score in 30 days.

Your 30-Day Fix Plan

Week 1: Technical fixes

- Fix robots.txt (Day 1)

- Check Cloudflare/firewall settings (Day 1)

- Audit domain authority, start link building (Day 2-7)

Week 2: Prompt expansion

- Add 10 category prompts (Day 8)

- Add 5 use case prompts (Day 9)

- Add 5 competitor prompts (Day 10)

- Set up tracking (Day 11-14)

Week 3: Content restructuring

- Update your top 5 guides with direct answers (Day 15-19)

- Add FAQ sections to each (Day 20-21)

Week 4: Monitoring and maintenance

- Set up competitor tracking (Day 22)

- Schedule quarterly content updates (Day 23)

- Review sentiment on all tracked prompts (Day 24-28)

- Document baseline metrics for comparison (Day 29-30)

Frequently Asked Questions

What is the biggest GEO mistake brands make?

Blocking AI crawlers in robots.txt is the single biggest mistake. If GPTBot, ClaudeBot, or PerplexityBot can’t access your site, those platforms cannot cite your content regardless of quality. Check yoursite.com/robots.txt right now. This is a 2-minute fix.

Why isn’t AI citing my content even though I rank well in Google?

AI needs content structured for extraction. Even if you rank #1 in Google, if your answer is buried deep in the article with unclear headings, AI models skip it for content that leads with the answer. Restructure to answer questions in the first 100 words.

How long does it take to fix these mistakes and see results?

Technical fixes (robots.txt, content restructuring) can show results in 1-4 weeks for sites with existing authority. Consistent content optimization takes 2-3 months to impact citation rates meaningfully, and domain authority improvements take 3-6 months.

Can I recover from blocking AI crawlers for months?

Yes. Once you allow AI crawlers, platforms will recrawl your content on their next cycle. For sites with good authority, citations can appear within 2-4 weeks after fixing access. The damage isn’t permanent, but every day you stay blocked is a lost opportunity.

Should I track branded or category prompts first?

Track both, but prioritize category prompts. Branded prompts show what AI says when users already know your name. Category prompts (like “best CRM for small teams”) show where new customers discover brands. That’s where the opportunity is.

How do I know if my domain authority is too low for AI citations?

Use Ahrefs or SEMrush to check your Domain Rating. DR below 30 rarely gets AI citations. DR 30-40 is moderate (citations for niche queries). DR 40+ is competitive. If you’re below 30, prioritize link building before content optimization.

How often should I update content to maintain citations?

Quarterly updates (every 3 months) are the minimum. Update statistics, refresh examples, and change the dateModified date. Plan annual rewrites for major pieces. AI favors recency, so consistent updates maintain citation rates.